Artificial intelligence chatbots have evolved from scripted, keyword-matching tools into conversational agents that handle nuance, context, and user intent. If you’ve ever wondered how AI chatbots work, or how they manage to respond naturally to your questions, you’re not alone. In 2025, chatbots are becoming primary customer service channels, with businesses saving millions while delivering 24/7 support. The global AI chatbot market is estimated at $10–15 billion and is growing at 24–30% annually. But what happens behind the scenes? How do these systems interpret what you’re asking and formulate meaningful responses? This guide explores the technology, machine learning algorithms, and neural network architecture that power modern AI chatbots, and explains what makes them fundamentally different from older chatbot generations.

How Do AI Chatbots Work: The Complete Process

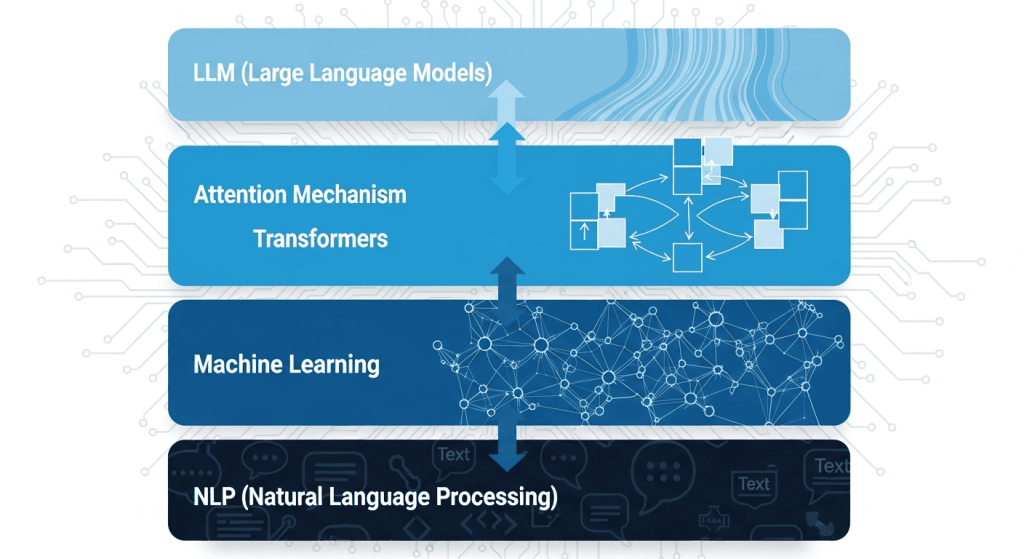

Understanding the Chatbot Architecture

Modern AI chatbots operate through an integrated system of interconnected components working in harmony. A typical AI chatbot’s architecture includes a Natural Language Understanding (NLU) engine that decodes user input into recognizable intent and entities, a dialogue manager that determines the flow of conversation, a Natural Language Generation (NLG) module that crafts coherent responses, a knowledge base that supplies content or answers, integrations with external tools like CRMs or payment systems, and a frontend interface where users interact with the system. Together, these components transform raw user input into intelligent, contextually aware responses that feel remarkably human-like.

Step 1: User Input Reception

The journey begins when a user provides input to the chatbot. This input can take multiple forms: traditional text through typing, voice commands using speech recognition technology, image uploads for visual analysis, or even video feeds for certain specialized applications. The chatbot captures this raw input and transmits it to the processing servers, where the real intelligence begins. Whether you’re typing “What’s my order status?” or speaking “Book me a flight to Tokyo,” the chatbot receives and stores your input for analysis.

Step 2: Natural Language Processing (NLP) Decodes Meaning

Once the input is received, the chatbot processes it through Natural Language Processing, the foundational technology that enables machines to interpret human language. NLP is the layer that distinguishes modern AI chatbots from the simplistic keyword-matching systems of the past. Here’s what happens during this crucial stage:

Tokenization breaks down the user’s text into smaller units called tokens—individual words, phrases, or subwords. This fragmentation helps the system analyze language structure and meaning.

Intent Recognition determines what the user actually wants. The chatbot learns to distinguish between similar-sounding requests. For instance, it understands that “Cancel my subscription” and “I want to stop my account” express the same intent: cancellation.

Entity Extraction identifies and pulls out specific, actionable data points. If you ask “Book me a hotel in Bangkok for December 15th,” the system extracts: location (Bangkok), date (December 15th), and type (hotel).

Sentiment Analysis detects the emotional tone behind your message. The chatbot recognizes whether you’re frustrated, satisfied, confused, or angry, allowing it to adjust its response tone accordingly. This is particularly valuable in customer service scenarios where emotional intelligence matters.

Context Tracking maintains memory across the conversation. If you mention an order number in message one and reference “that order” in message three, the chatbot remembers the context without you having to repeat the information.

Modern NLP systems accomplish this through sophisticated algorithms and techniques like syntactic parsing (understanding sentence structure) and semantic analysis (grasping meaning beyond individual words).
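The stages above can be sketched in a few lines of Python. This is a toy illustration only: the intent names, keyword sets, and regular expressions are hypothetical, and keyword overlap stands in for the trained models a production NLU engine would use.

```python
import re

# Hypothetical intents and keywords; a real system would use a trained model.
INTENT_KEYWORDS = {
    "cancel_subscription": {"cancel", "stop", "unsubscribe"},
    "book_hotel": {"book", "hotel", "reserve"},
}

MONTHS = ("January|February|March|April|May|June|July|"
          "August|September|October|November|December")


def tokenize(text: str) -> list:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def recognize_intent(tokens: list) -> str:
    """Pick the intent whose keyword set overlaps the tokens the most."""
    token_set = set(tokens)
    scores = {name: len(words & token_set) for name, words in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def extract_entities(text: str) -> dict:
    """Pull out a location and a date using simple, illustrative patterns."""
    entities = {}
    location = re.search(r"\bin ([A-Z][a-z]+)", text)
    if location:
        entities["location"] = location.group(1)
    date = re.search(rf"\b(?:{MONTHS}) \d{{1,2}}(?:st|nd|rd|th)?\b", text)
    if date:
        entities["date"] = date.group(0)
    return entities


message = "Book me a hotel in Bangkok for December 15th"
intent = recognize_intent(tokenize(message))
entities = extract_entities(message)
```

Here both “Cancel my subscription” and “I want to stop my account” map to the same hypothetical `cancel_subscription` intent, although a keyword table like this breaks down quickly on phrasing it has not seen, which is the gap learned models are meant to close.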

Step 3: Machine Learning and Intent Classification

After initial language processing, the chatbot applies machine learning to deepen its understanding. This is where large language models (LLMs) and deep learning come into play. Rather than relying on rigid rules, these systems have been trained on billions of text examples that teach them patterns of human language.

Intent classification leverages this training to categorize user requests into predefined goals. Is the user asking for account help, technical support, product recommendations, or something else entirely? The machine learning model assigns a probability score to each possible intent, then selects the most likely one.
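To make the probability step concrete, here is a minimal sketch of how raw model scores might be turned into probabilities with a softmax and then thresholded. The intent names, the raw scores, and the 0.5 threshold are all assumptions chosen for illustration.

```python
import math


def softmax(scores: dict) -> dict:
    """Convert raw model scores (logits) into probabilities that sum to 1."""
    highest = max(scores.values())  # subtract the max for numerical stability
    exps = {name: math.exp(value - highest) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}


def classify(scores: dict, threshold: float = 0.5) -> tuple:
    """Return the most likely intent, or 'fallback' when confidence is low."""
    probabilities = softmax(scores)
    best = max(probabilities, key=probabilities.get)
    if probabilities[best] < threshold:
        return "fallback", probabilities[best]
    return best, probabilities[best]


# Hypothetical raw scores a model might emit for one user message.
raw_scores = {"account_help": 2.1, "technical_support": 0.3, "product_recommendation": -1.0}
chosen_intent, confidence = classify(raw_scores)
```

The fallback branch matters in practice: when no intent is clearly ahead, asking a clarifying question is usually safer than acting on a weak guess.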

Context retention in multi-turn conversations is enabled by neural networks that maintain conversation history. Modern chatbots don’t just look at the current message—they understand the full context of your conversation thread, enabling genuinely natural dialogue rather than isolated question-and-answer exchanges.

The machine learning component can improve as interaction data accumulates. Deployed models generally do not update themselves after every conversation; instead, logged conversations are reviewed and used to retrain or fine-tune the model periodically, helping it handle more complex or unusual queries over time. This retraining cycle is what makes chatbots more capable from one release to the next.

Step 4: The Role of Transformers and Neural Networks

The architecture powering today’s most capable chatbots relies on transformer neural networks, a breakthrough technology that fundamentally changed natural language processing. Unlike older neural network designs, transformers use an “attention mechanism” that weighs the importance of each word relative to others in the conversation.

Think of it this way: in the sentence “The bank executive wanted to meet near the river bank,” a transformer can use attention to understand that the first “bank” refers to a financial institution while the second “bank” refers to a riverbank. This contextual intelligence emerges from the attention mechanism, which essentially asks: “Which parts of the previous conversation are most relevant to this response?”
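The weighting idea can be illustrated with scaled dot-product attention for a single query. This is a simplified sketch: the two-dimensional vectors below are hand-picked for the example, whereas a real transformer learns its query, key, and value projections during training and runs many attention heads in parallel.

```python
import math


def attention_weights(query: list, keys: list) -> list:
    """Softmax over scaled dot products between one query and every key."""
    dimension = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(dimension)
        for key in keys
    ]
    highest = max(scores)
    exps = [math.exp(score - highest) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def attend(query: list, keys: list, values: list) -> list:
    """Blend the value vectors according to the attention weights."""
    weights = attention_weights(query, keys)
    width = len(values[0])
    return [sum(w * value[i] for w, value in zip(weights, values)) for i in range(width)]


# Hypothetical vectors: the second "bank" queries its context words.
context_words = ["river", "executive"]
keys = [[2.0, 0.0], [0.0, 2.0]]
values = [[1.0, 0.0], [0.0, 1.0]]
query_for_second_bank = [1.0, 0.0]

weights = attention_weights(query_for_second_bank, keys)
blended = attend(query_for_second_bank, keys, values)
```

With these made-up numbers, “river” receives most of the weight, so the blended representation of the second “bank” leans toward the riverbank sense.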

Deep neural networks are loosely inspired by the way biological neurons connect, and they learn to process and predict language structures. These networks consist of multiple layers, each transforming information and extracting increasingly abstract features. A word isn’t just a word to these networks: it’s converted into mathematical representations called embeddings that capture semantic meaning.

Models like GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers) are built on transformer architecture. GPT models are trained on diverse internet text, capturing patterns in billions of words, phrases, and conversations. This pre-training enables them to generate coherent, contextually appropriate responses to novel queries they’ve never explicitly seen before.

Step 5: Knowledge Base Retrieval

Before generating a response, intelligent chatbots consult their knowledge base—a repository of information specifically designed to answer common questions and handle specific scenarios. For customer service chatbots, this might include product specifications, pricing, return policies, troubleshooting guides, or FAQ documents.

The system performs a semantic search to find relevant information. Instead of keyword matching, semantic search understands meaning and context. If your knowledge base contains “Our return window is 30 days,” the system can retrieve this information when you ask “How long do I have to return this?” even though you used different words.
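A common way to implement semantic search is to compare embedding vectors with cosine similarity. The sketch below assumes that approach and uses tiny, hand-written three-dimensional vectors as stand-ins; in a real system both the knowledge base entries and the query would be embedded by a trained model, and the entries other than the return policy are invented for the example.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings; real ones come from a model and have hundreds of dimensions.
KNOWLEDGE_BASE = {
    "Our return window is 30 days.": [0.9, 0.1, 0.0],
    "Standard shipping takes five business days.": [0.1, 0.9, 0.1],
    "Support is available 24/7.": [0.0, 0.2, 0.9],
}


def search(query_vector: list, top_k: int = 1) -> list:
    """Rank knowledge base entries by similarity to the query vector."""
    scored = [
        (text, cosine_similarity(query_vector, vector))
        for text, vector in KNOWLEDGE_BASE.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Pretend embedding of "How long do I have to return this?"
query_vector = [0.8, 0.2, 0.1]
best_match = search(query_vector)[0]
```

The query shares almost no words with the stored sentence, yet it lands closest to the return policy entry because the comparison happens in vector space rather than on the text itself.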

For cutting-edge implementations, Retrieval-Augmented Generation (RAG) combines the knowledge base with generative AI. RAG retrieves relevant documents or information in real-time, then uses that information to generate accurate, up-to-date responses. This prevents the chatbot from relying solely on training data that might be outdated. For example, a financial advisory chatbot using RAG can retrieve current stock prices rather than outdated historical data, ensuring recommendations are accurate and timely.
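The retrieve-then-generate flow can be outlined as follows. This is a sketch under stated assumptions: retrieval uses simple word overlap as a stand-in for semantic search, the documents and prompt wording are illustrative, and `generate` is a caller-supplied function standing in for whatever language model the system uses.

```python
import re
from typing import Callable

# Illustrative documents; a real deployment would query a document store.
DOCUMENTS = [
    "Our return window is 30 days.",
    "Support is available 24/7 through chat.",
]


def words(text: str) -> set:
    """Lowercased word set used for the toy overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list, top_k: int = 1) -> list:
    """Return the documents sharing the most words with the question."""
    question_words = words(question)
    ranked = sorted(documents, key=lambda doc: len(question_words & words(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, passages: list) -> str:
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


def answer_with_rag(question: str, generate: Callable[[str], str]) -> str:
    """Retrieve supporting text, then hand the grounded prompt to the model."""
    passages = retrieve(question, DOCUMENTS)
    return generate(build_prompt(question, passages))
```

Because the model only sees what retrieval returns, updating the document store changes the answers without retraining the model.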

Step 6: Dialogue Management and Response Strategy

Dialogue management determines how the conversation should proceed. It considers the user’s intent, context, previous messages, and system capabilities to decide the best course of action. Should the system provide a direct answer? Ask clarifying questions? Escalate to a human agent? Offer multiple options?

The dialogue manager maintains a conversation state that tracks what’s been discussed, what’s been resolved, and what remains outstanding. In complex multi-turn conversations, this state management ensures the chatbot doesn’t lose track of context or repeat information.
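One way to picture conversation state is as a small record that the dialogue manager inspects before choosing its next move. The field names, the required slots, and the escalation rule of two failed turns are hypothetical choices made for this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical slots each intent needs before it can be fulfilled.
REQUIRED_SLOTS = {"book_hotel": ["location", "date"]}


@dataclass
class ConversationState:
    intent: Optional[str] = None               # what the user wants, once known
    slots: dict = field(default_factory=dict)  # entities collected so far
    failed_turns: int = 0                      # turns the bot could not interpret


def next_action(state: ConversationState) -> str:
    """Decide whether to answer, ask for more detail, or hand off."""
    if state.failed_turns >= 2:
        return "escalate_to_human"
    if state.intent is None:
        return "ask_clarifying_question"
    missing = [slot for slot in REQUIRED_SLOTS.get(state.intent, []) if slot not in state.slots]
    if missing:
        return f"ask_for_{missing[0]}"
    return "answer"


state = ConversationState(intent="book_hotel", slots={"location": "Bangkok"})
action = next_action(state)  # the date is still missing
```

Keeping the state explicit is what lets the bot ask only for the missing date instead of repeating the whole booking flow.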

Step 7: Response Generation

With understanding established and strategy determined, the chatbot generates its response. Modern systems typically use one of three approaches:

Templated Responses rely on pre-written answer templates that match the identified intent. For straightforward queries like “What are your business hours?” the system retrieves the appropriate template and delivers it. This approach is fast, reliable, and prevents hallucination.

Generative Responses use language models to create novel responses based on the conversation context. Rather than selecting from pre-written options, the AI generates text specifically tailored to the user’s query. This approach feels more natural for complex conversations but requires careful implementation to prevent incorrect information.

Hybrid Approaches combine both methods, using templated responses for routine queries and generative AI for complex interactions. This balances reliability with flexibility.
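A hybrid strategy can be as simple as checking for a template first and falling back to generation otherwise. In this sketch the template texts and intent names are illustrative, and `generate` is a placeholder for a language model call supplied by the caller.

```python
from typing import Callable

# Illustrative templates keyed by intent; placeholders are filled at run time.
TEMPLATES = {
    "business_hours": "Our business hours are {hours}.",
    "return_policy": "Our return window is 30 days.",
}


def respond(intent: str, message: str, generate: Callable[[str], str], **facts: str) -> str:
    """Use a template when one exists for the intent; otherwise generate."""
    template = TEMPLATES.get(intent)
    if template is not None:
        return template.format(**facts)
    return generate(message)


def fake_model(message: str) -> str:
    """Stand-in for a generative model, used only to exercise the fallback."""
    return f"(generated reply to: {message})"


templated = respond("business_hours", "When are you open?", fake_model, hours="9am to 5pm")
generated = respond("complaint", "My order arrived damaged.", fake_model)
```

Routine questions never reach the model, which keeps those answers fast and predictable, while unrecognized intents still get a tailored reply.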

In voice-enabled chatbots, the text response is converted to speech using Text-to-Speech (TTS) technology, which renders written words as natural-sounding audio in various languages and voices.

Key Technologies Behind AI Chatbots

Natural Language Processing (NLP)

Natural Language Processing is the foundation of all modern chatbot intelligence. NLP algorithms process and analyze textual data, enabling chatbots to comprehend user inputs and generate contextually relevant responses. The field encompasses multiple specialized subtasks:

Natural Language Understanding (NLU) focuses on extracting meaning from text and speech, interpreting both explicit requests and implied intentions. Natural Language Generation (NLG) focuses on creating coherent, contextually appropriate responses that sound natural to human readers.

Modern NLP frameworks like spaCy and Google’s Dialogflow provide pre-built models that businesses can customize and deploy quickly. These frameworks have dramatically lowered the barrier to building sophisticated chatbots, allowing organizations without deep AI expertise to implement conversational solutions.

Machine Learning and Continuous Improvement

Unlike static, rule-based chatbots that follow rigid scripts, AI chatbots that use machine learning can improve as they are retrained on new interaction data. Over time, this adaptive process results in improved accuracy at understanding complex or nuanced queries, personalized recommendations based on user history, and reduced errors as the system learns from past mistakes.

The machine learning pipeline involves several distinct phases. Data collection gathers diverse conversation examples. Data preprocessing cleans and structures this data for learning. Model training uses supervised learning (where correct answers are provided) or self-supervised learning (where the system learns patterns from raw data). Testing and validation ensure the model performs well on unseen data. Continuous improvement involves monitoring real-world performance and retraining with new data.

Large Language Models (LLMs)

Large Language Models represent a paradigm shift in AI chatbots. These neural networks, trained on hundreds of billions of words from the internet and other sources, have developed remarkable language understanding capabilities. Models like ChatGPT, Claude, and Gemini demonstrate the power of this approach—they can engage in nuanced conversations, explain complex topics, write creative content, and adapt to specialized domains.

LLMs achieve this through an approach called self-supervised learning, where the model learns patterns from unlabeled data. During training, generative models such as GPT predict the next token in a sequence, while encoder models such as BERT predict masked words in sentences; in both cases the model picks up linguistic patterns and world knowledge. This pre-training creates a foundation of language understanding that can be adapted for specific applications.

The breakthrough of LLMs lies in their emergent abilities: capabilities that weren’t explicitly programmed but emerged from training on diverse data. These include instruction following, few-shot learning (learning from just a few examples), chain-of-thought reasoning (breaking complex problems into steps), and knowledge transfer (applying learning from one domain to another).

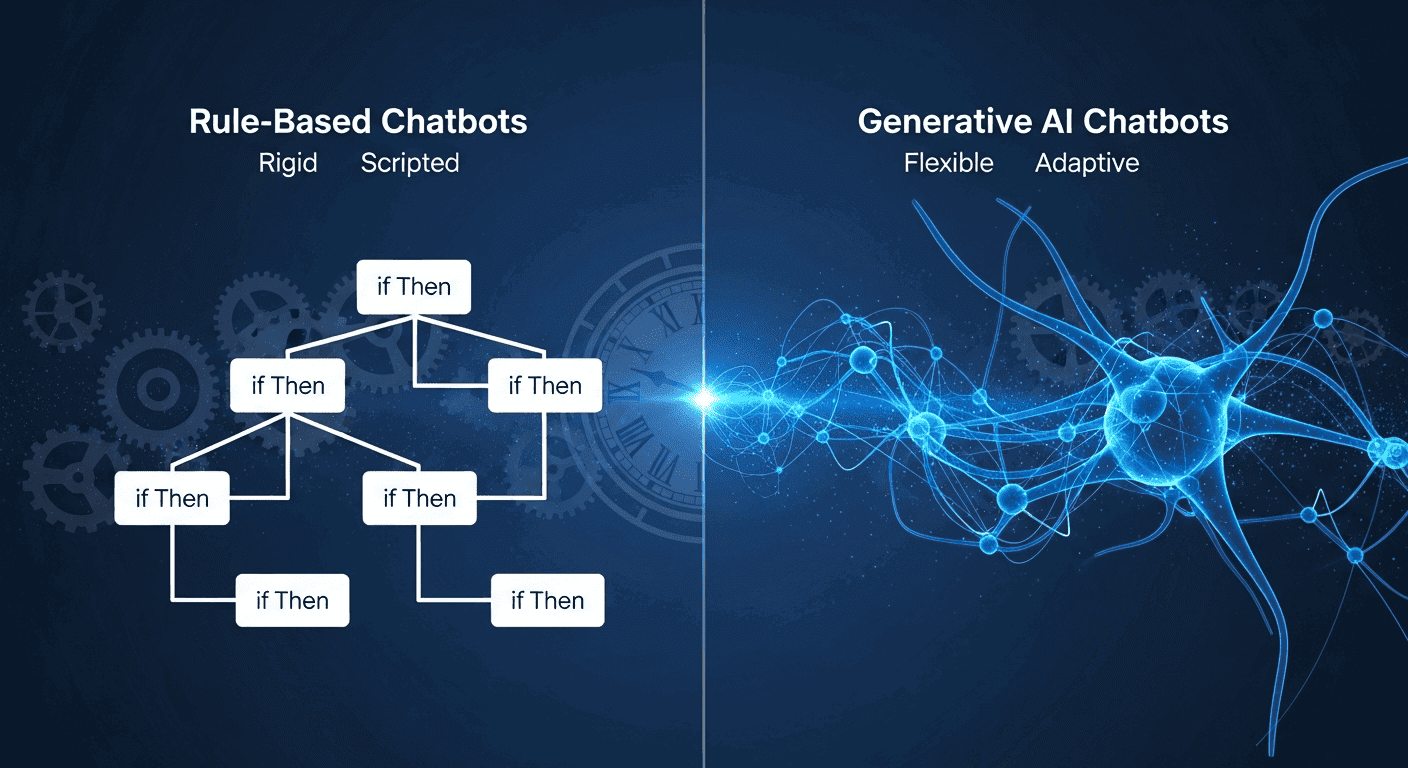

Generative AI vs. Rule-Based Chatbots: Understanding the Difference

Understanding how AI chatbots have evolved illuminates why modern systems are so effective.

Rule-Based Chatbots: The Traditional Approach

Rule-based chatbots operate using predetermined sets of conditional rules. These systems use if/then statements to verify the presence of specific keywords and deliver corresponding responses. A rule-based chatbot for customer service might use logic like: IF user input contains “order status” AND customer ID is recognized THEN retrieve and display order information.
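The if/then logic described above translates almost directly into code. The customer ID, order data, and reply wording below are made up for the example.

```python
# Hypothetical order lookup table keyed by customer ID.
ORDERS = {"C-1001": "shipped"}

FALLBACK = "Sorry, I did not understand that."


def rule_based_reply(message: str, customer_id: str) -> str:
    """IF the input contains 'order status' AND the customer is recognized
    THEN show the order information; otherwise fall back."""
    text = message.lower()
    if "order status" in text and customer_id in ORDERS:
        return f"Your order status is: {ORDERS[customer_id]}."
    return FALLBACK


matched = rule_based_reply("What is my order status?", "C-1001")
missed = rule_based_reply("Where is my package?", "C-1001")
```

The second message asks for the same thing in different words and still hits the fallback, which illustrates how brittle exact keyword rules can be.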

Advantages of rule-based systems include predictability (responses are controlled and expected), cost-efficiency (simpler to build and maintain), and transparency (you know exactly how the system will respond). Disadvantages include limited flexibility (unable to handle unexpected questions), poor user experience (conversations feel scripted and restrictive), and high maintenance (adding new capabilities requires manual rule creation).

Generative AI Chatbots: The Modern Solution

Generative AI chatbots leverage large language models to create novel responses based on training data and conversation context. Rather than following rigid rules, they’ve learned patterns from billions of text examples, enabling them to generate appropriate responses for questions they’ve never explicitly encountered.

Key advantages include flexibility and adaptability (handling unexpected questions naturally), human-like conversations (responses feel natural and contextual), and scalability (handling diverse industries and use cases without redesign). Challenges include hallucination risk (occasionally generating false information), less transparency (harder to understand why specific responses were generated), and higher computational costs (requiring more processing power than rule-based systems).

The gap between these approaches is narrowing as hybrid systems combine the best of both worlds—using rule-based logic for routine queries while leveraging generative AI for complex conversations.

Training Data: Teaching Chatbots to Understand and Respond

Behind every intelligent chatbot lies massive amounts of training data. The quality and diversity of this data fundamentally determines chatbot performance.

Data Collection and Preparation

Chatbot training data comes from multiple sources: annotated conversations where humans mark important elements, real customer interactions, public datasets like Reddit discussions or movie scripts, and domain-specific documents like technical manuals or product guides.

Once collected, this data undergoes preprocessing—cleaning, structuring, and formatting for machine learning. Typos might be corrected, sensitive information redacted, and text normalized to consistent formats.

Supervised vs. Self-Supervised Learning

Supervised learning provides explicit labels and correct answers. For intent recognition, the training data consists of pairs: (user input, correct intent). The model learns to map inputs to intents. This approach requires human effort to create labeled datasets but produces reliable, focused models.
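As a rough illustration of learning from (user input, correct intent) pairs, here is a small Naive Bayes classifier written from scratch. Naive Bayes is chosen only because it fits in a few lines; the article does not prescribe a particular algorithm, and the six training pairs are invented.

```python
import math
import re
from collections import Counter, defaultdict

# Invented labeled examples: (user input, correct intent).
TRAINING_PAIRS = [
    ("cancel my subscription", "cancel"),
    ("i want to stop my account", "cancel"),
    ("please close my account", "cancel"),
    ("what is my order status", "order_status"),
    ("where is my order", "order_status"),
    ("track my package", "order_status"),
]


def tokenize(text: str) -> list:
    return re.findall(r"[a-z]+", text.lower())


def train(pairs: list) -> tuple:
    """Count how often each word appears under each intent."""
    word_counts = defaultdict(Counter)
    intent_counts = Counter()
    for text, intent in pairs:
        intent_counts[intent] += 1
        word_counts[intent].update(tokenize(text))
    vocabulary = {word for counts in word_counts.values() for word in counts}
    return word_counts, intent_counts, vocabulary


def predict(text: str, model: tuple) -> str:
    """Score each intent with log prior plus smoothed log likelihoods."""
    word_counts, intent_counts, vocabulary = model
    total_examples = sum(intent_counts.values())
    best_intent, best_score = None, -math.inf
    for intent, count in intent_counts.items():
        score = math.log(count / total_examples)
        total_words = sum(word_counts[intent].values())
        for word in tokenize(text):
            if word not in vocabulary:
                continue  # ignore words never seen in training
            # Add-one (Laplace) smoothing avoids zero probabilities.
            score += math.log((word_counts[intent][word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


model = train(TRAINING_PAIRS)
prediction = predict("stop my subscription", model)
```

The phrase “stop my subscription” never appears in the training pairs, but the model still maps it to the cancellation intent because its individual words were seen under that label.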

Self-supervised learning extracts patterns from unlabeled data. Large language models use this approach, where the system predicts masked words or next words in sequences. This method requires minimal human annotation and enables learning from vast internet-scale datasets.
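The reason no human labels are needed is that the text supplies its own targets. The sketch below shows one simple way next-word training examples could be carved out of a raw sentence; the whitespace tokenization and the context size of three are simplifying assumptions.

```python
def next_word_examples(text: str, context_size: int = 3) -> list:
    """Turn raw text into (context, next word) pairs without any labeling."""
    tokens = text.lower().split()
    examples = []
    for position in range(1, len(tokens)):
        context = tokens[max(0, position - context_size):position]
        examples.append((context, tokens[position]))
    return examples


pairs = next_word_examples("our return window is 30 days")
# pairs[0] is (["our"], "return"); pairs[-1] is (["window", "is", "30"], "days")
```

Every sentence in a corpus yields several such pairs, which is how internet-scale text becomes an enormous training set with minimal annotation.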

Data Quality and Diversity

The principle of garbage in, garbage out applies critically to chatbot training. Training data should represent the actual queries the chatbot will encounter. If your customer base is multilingual, training data should reflect this diversity. If you sell winter and summer products, training data should include seasonal variations.

Data imbalance is another crucial consideration. If training data contains 1000 supportive customer messages but only 50 angry ones, the model struggles to recognize and appropriately respond to anger. Careful dataset curation ensures balanced representation across intent types and emotional tones.
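When collecting more examples of the rare class is not possible, one common mitigation is to weight classes inversely to their frequency during training. The sketch below applies that idea to the 1000 versus 50 example from the paragraph above; the formula is the widely used "balanced" heuristic, offered here as one option rather than the only remedy.

```python
from collections import Counter


def class_weights(labels: list) -> dict:
    """Weight each class by total / (number of classes * class count)."""
    counts = Counter(labels)
    total = len(labels)
    number_of_classes = len(counts)
    return {
        label: total / (number_of_classes * count)
        for label, count in counts.items()
    }


labels = ["supportive"] * 1000 + ["angry"] * 50
weights = class_weights(labels)
# supportive -> 0.525, angry -> 10.5
```

With these weights, each angry message counts twenty times as much as a supportive one in the loss, so the model is penalized more for ignoring the minority class.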

Real-World Applications and Use Cases

Customer Service and Support

AI chatbots are reshaping customer support across industries. By some industry estimates, they handle around 70% of simple inquiries autonomously and escalate complex issues to human agents. Financial services firms have reported 70% higher first-call resolution rates with chatbot assistance, and retailers have reported sales increases of up to 25% when using digital assistants for product recommendations.

The 24/7 availability of chatbots eliminates wait times and ensures customers receive immediate responses, dramatically improving satisfaction scores and reducing support costs. Major financial institutions report handling millions of customer interactions monthly through chatbots.

E-Commerce and Sales

Conversational commerce is growing rapidly, with chatbots providing product recommendations, answering sizing questions, processing orders, and even completing transactions entirely within the chat interface. Multimodal chatbots can now analyze product images uploaded by customers, identify issues, and recommend solutions—dramatically improving technical support for e-commerce businesses.

Healthcare

Healthcare chatbots assist with appointment scheduling, symptom assessment, medication reminders, and mental health support. An estimated 22% of US adults now use mental wellbeing bots for support. These applications improve accessibility to healthcare while reducing the administrative burden on medical staff; administrative work is estimated to account for approximately 70% of potential automation in healthcare settings.

Education and Training

Intelligent tutoring systems using chatbot technology provide personalized learning experiences, answering questions, explaining concepts, and adapting difficulty levels to individual student progress. These systems democratize access to high-quality instruction, particularly benefiting students in underserved regions.

Emerging Trends: The Future of AI Chatbots

Multimodal Chatbots

Traditional chatbots were text-only. Multimodal chatbots process and understand multiple types of data simultaneously: text, voice, images, and video. This represents a fundamental shift toward more natural human-AI interaction.

For example, a multimodal customer service chatbot can:

- Analyze photos of damaged products uploaded by customers

- Listen to voice descriptions of problems

- Respond with both text and voice options

- Show relevant product images or demonstration videos

Advanced implementations like ChatGPT with Advanced Voice Mode enable fluid, natural conversations where users can interrupt mid-sentence, switch languages, and engage in nuanced dialogue.

Retrieval-Augmented Generation (RAG)

RAG represents a sophisticated hybrid approach that addresses limitations of pure generative models. Instead of relying solely on training data, RAG systems dynamically retrieve relevant information from external knowledge sources in real-time, then use that information to generate responses.

Advantages of RAG include accuracy (responses grounded in real-time data), reduced hallucination (fewer fabricated facts), up-to-date information (no need for expensive retraining), and cost efficiency (avoiding the expense of continuously retraining large models).

Example: A financial advisory chatbot using RAG retrieves current stock prices, real-time economic data, and recent market analysis before generating investment recommendations, ensuring users receive accurate, actionable advice rather than recommendations based on outdated training data.

Voice-Enabled and Conversational AI

Voice interfaces are becoming standard rather than exceptional. By 2030, the voice-enabled chatbot market is projected to reach $15.5 billion. Natural-sounding voice interactions with appropriate intonation, emotion, and accent variation are becoming industry standard.

Specialized Domain Applications

Chatbots are becoming deeply specialized for specific industries. Legal chatbots assist with contract review and research. Medical chatbots provide diagnostic support. Financial chatbots offer portfolio analysis. Within its own domain, a specialized chatbot can be considerably more accurate and useful than a general-purpose one.

Common Questions About AI Chatbots

How do AI chatbots understand what users are saying?

AI chatbots use Natural Language Processing (NLP) algorithms to break down user input into intents (goals) and entities (data points). They apply sentiment analysis to detect tone and context tracking to follow multi-turn conversations. This combination enables bots to handle varied phrasing, slang, typos, and follow-up questions with remarkable accuracy—transforming human language into structured data the system can process and respond to appropriately.

What’s the difference between ChatGPT and other AI chatbots?

ChatGPT, built on OpenAI’s GPT family of large language models, excels at general-purpose conversation, content generation, and reasoning across diverse topics. Other chatbots may be specialized for specific industries (medical, legal, financial), optimized for particular platforms (customer service, social media), or designed for specific capabilities (translation, code generation). ChatGPT’s main advantage is versatility and natural conversation; other chatbots often outperform it within their specialized domains.

Can AI chatbots actually learn and improve over time?

Yes, through machine learning, although usually not in real time. When properly implemented, chatbots improve through several mechanisms: supervised feedback where human trainers correct mistakes, reinforcement learning where systems learn from reward signals, and continuous retraining where new conversation data is periodically incorporated into model updates. Over time, this makes chatbots increasingly accurate, contextually aware, and better at handling edge cases.

Why do AI chatbots sometimes give wrong answers?

Several factors contribute to hallucination or incorrect responses: knowledge cutoffs (training data only extends to a certain date), limited context (the chatbot lacks the information needed to interpret the question correctly), knowledge gaps (the training data lacks information on the topic), or bias in training data (reflecting historical inaccuracies or biases). Generative models also produce text by predicting likely continuations, so a fluent answer is not necessarily a verified one. Advanced techniques like RAG mitigate these issues by grounding responses in real-time information sources.

How do chatbots handle sensitive information like passwords or payment data?

Well-designed chatbots follow strict security protocols: encryption for data in transit and at rest, PCI compliance for payment information, tokenization (replacing sensitive data with non-sensitive tokens), access controls limiting who can view sensitive data, and audit logging tracking all access. Many chatbots intentionally avoid handling highly sensitive information, instead transferring users to secured channels when necessary.
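The tokenization idea mentioned above can be sketched as follows. This is a deliberately simplified illustration: the card pattern is a rough heuristic, the `tok_` prefix is arbitrary, and the in-memory dictionary stands in for the hardened, access-controlled vault a PCI-compliant system would require.

```python
import re
import secrets

# Rough heuristic: 13 to 16 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def tokenize_cards(message: str, vault: dict) -> str:
    """Replace card-like numbers with random tokens and store the originals."""

    def replace(match):
        token = "tok_" + secrets.token_hex(8)
        # Store the digits only; the vault is an in-memory stand-in here.
        vault[token] = re.sub(r"[ -]", "", match.group(0))
        return token

    return CARD_PATTERN.sub(replace, message)


vault = {}
safe_message = tokenize_cards("My card is 4111 1111 1111 1111, please charge it.", vault)
```

Downstream components such as logs, analytics, and the language model itself then see only the token, while the real number stays inside the vault.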

Can AI chatbots understand different languages?

Modern chatbots handle multilingual conversations effectively. Some use language detection to identify which language the user is speaking, then process accordingly. Others use multilingual language models trained on billions of words across dozens of languages, understanding patterns that transfer across languages. Advanced implementations provide real-time translation, enabling seamless communication even when the user and system use different languages.

How long does it take to build a functional AI chatbot?

Timeline depends heavily on complexity. Simple rule-based chatbots for frequently asked questions: 1-4 weeks. Generative chatbots with basic integration: 2-3 months. Enterprise-grade systems with RAG, multimodal capabilities, and complex integrations: 3-6 months. Using no-code or low-code platforms can reduce timelines significantly, though advanced customization may require additional development time.

Conclusion: The Intelligence Behind Natural Conversation

AI chatbots have evolved from simple keyword-matching scripts into genuinely intelligent conversational partners. The journey from user input to appropriate response involves sophisticated integration of multiple technologies: Natural Language Processing decodes meaning from human language, machine learning enables continuous improvement and adaptation, neural networks and transformers power contextual understanding, and knowledge bases combined with retrieval-augmented generation ground responses in accurate, current information.

The global chatbot market, now valued at $10–15 billion and growing 24–30% annually, reflects the genuine value these systems deliver. Businesses reduce support costs by millions while improving customer satisfaction. Users receive 24/7 assistance in their preferred language, across multiple input modalities, with responses tailored to their individual context.

The trajectory is clear: as transformer architectures improve, multimodal capabilities expand, and specialized applications deepen, chatbots will become increasingly indispensable. The bots your business interacts with today are more capable than last year’s, and next year’s will be more capable still. Understanding how these systems work (the data, algorithms, and architecture beneath the surface) prepares you to use them effectively and to recognize both their capabilities and their limitations.

Ready to explore chatbot possibilities for your business or learn more about specific implementations? Discover how enterprise chatbots can transform your customer service strategy, or dive deeper into any specific technology mentioned in this guide.